Google Integrates Personal Intelligence with Nano Banana Image Generation to Transform AI Creativity through User Data

The landscape of generative artificial intelligence is undergoing a fundamental shift from generalized outputs toward hyper-personalization. Google has officially announced the integration of "Personal Intelligence" within its Nano Banana image generation framework, a move that effectively bridges the gap between a user’s private digital life and the creative potential of AI. By allowing the Gemini ecosystem to access a user’s Google Photos library, Gmail correspondence, and Google Docs, the company aims to eliminate the "prompting hurdle"—the difficulty many users face when trying to describe a specific vision in text. This new functionality enables the AI to "fill in the blanks" of a user’s imagination by leveraging existing context from their personal data, marking a significant milestone in the evolution of multimodal AI.

The Evolution of Image Generation: From Prompts to Context

For the past several years, the primary challenge in AI-driven creativity has been the reliance on complex prompt engineering. To achieve a specific aesthetic or include particular individuals in a generated image, users were previously required to write exhaustive descriptions, often spanning multiple paragraphs. Even then, the results frequently missed the mark, failing to capture the nuance of a person’s likeness or the specific "vibe" of a user’s preference.

Google’s introduction of Personal Intelligence via the Nano Banana 2 model represents a departure from this text-heavy paradigm. Instead of starting from a blank slate, the AI now operates with a foundational understanding of the user. By indexing the user’s interests, frequently visited locations, and the visual styles present in their Google Photos library, Gemini can generate images that are inherently tailored to the individual. This "context-aware" generation allows for much shorter, more intuitive commands. For example, a user no longer needs to describe their family’s appearance; they can simply ask for a "claymation-style portrait of my family at the beach," and the AI will reference tagged individuals in Google Photos to produce an accurate representation.

Technical Architecture: The Nano Banana 2 Model

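At the heart of this update is the Nano Banana 2 model. It succeeds the image model Google released in August 2025 as Gemini 2.5 Flash Image, which had been tested publicly under the codename "Nano Banana." Despite the name, the Nano Banana line is separate from Gemini Nano, Google’s family of small on-device models. The original Nano Banana belonged to Google’s Flash tier, which gives up some of the scale of the company’s largest models in exchange for speed and low latency. That tradeoff suits interactive image generation on both mobile and desktop.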

Nano Banana 2 introduces several key technical improvements over its predecessor:

- Enhanced Semantic Mapping: The model has a better grasp of the relationship between personal nouns (e.g., "my dog," "my car") and the visual data stored in a user’s account.

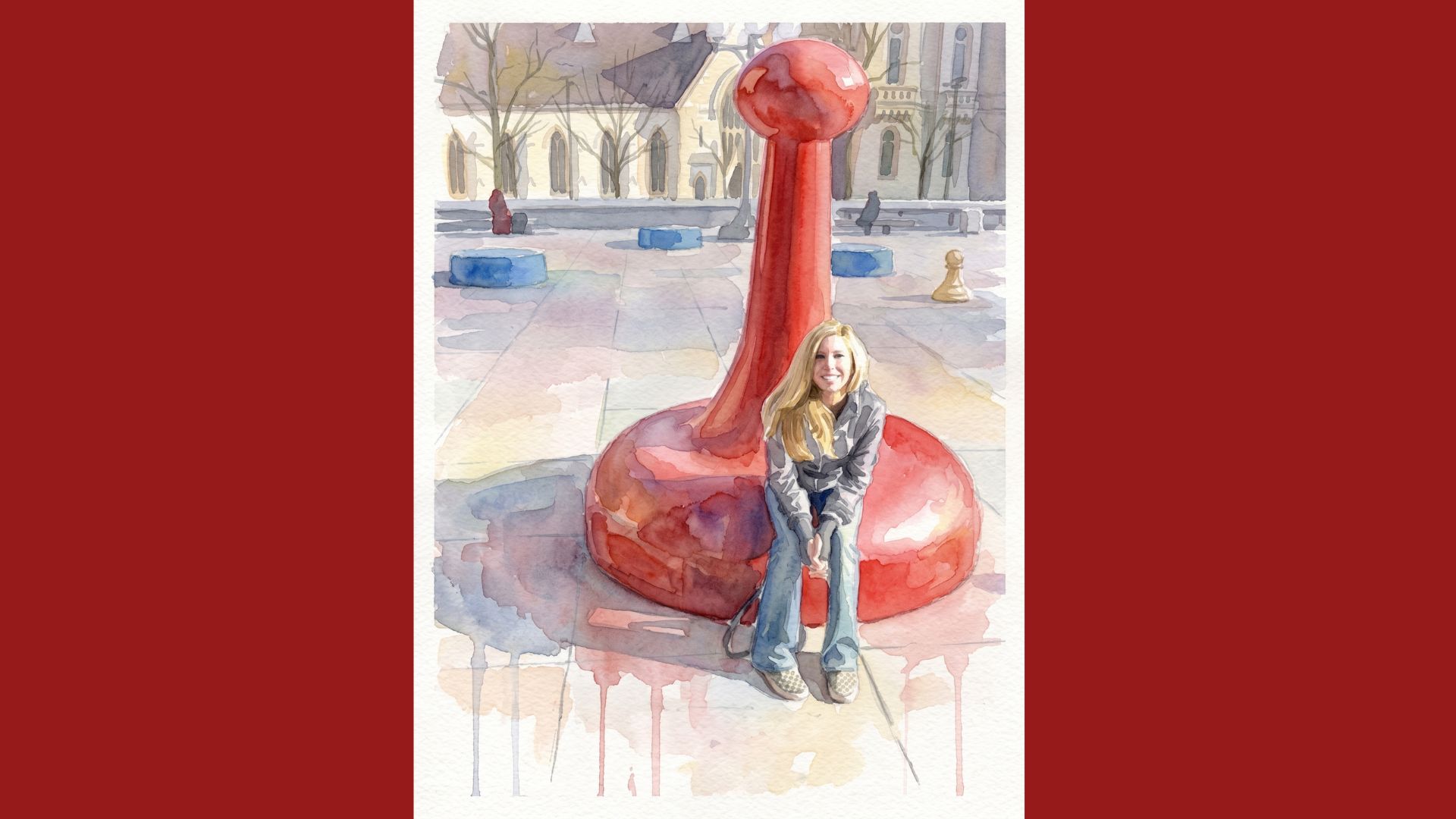

- Visual Consistency: One of the most difficult tasks in AI generation is maintaining a consistent likeness of a real person across different artistic styles. Nano Banana 2 utilizes advanced diffusion techniques to map the facial features and characteristics of people in a user’s Google Photos library onto generated subjects, whether the desired output is a 3D render, a watercolor painting, or a cinematic photograph.

- Improved Text Rendering: Addressing a common complaint in generative AI, the new model features upgraded capabilities for rendering legible text within images, making it more useful for creating personalized invitations, cards, or social media content.
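A minimal sketch can illustrate the semantic mapping of personal nouns. Google has not published how Nano Banana 2 links a phrase like "my dog" to account data, so every part of this example is an assumption. The `Entity` record, its `relations` phrases, and `resolve_personal_references` are hypothetical names, and plain phrase matching stands in for whatever learned mapping the real model uses. The matched entities are the ones whose labeled photos would be passed to the generator as likeness references.

```python
import re
from dataclasses import dataclass, field


# Hypothetical data model: Google has not published how labeled subjects are stored.
@dataclass
class Entity:
    label: str                                          # name the user gave the face or pet group
    kind: str                                           # "person", "pet", ...
    relations: set[str] = field(default_factory=set)    # lowercase phrases such as "my dog"
    photo_ids: list[str] = field(default_factory=list)  # labeled photos usable as likeness references


def resolve_personal_references(prompt: str, entities: list[Entity]) -> tuple[str, list[Entity]]:
    """Find entities referred to by personal phrases in the prompt (hypothetical sketch).

    Returns the prompt annotated with the matched names, plus the matched
    entities whose photos would condition the image generator.
    """
    lowered = prompt.lower()
    matched = [
        entity
        for entity in entities
        # \b anchors stop "my dog" from matching inside "my dogs"
        if any(re.search(rf"\b{re.escape(phrase)}\b", lowered) for phrase in entity.relations)
    ]
    if not matched:
        return prompt, []
    names = ", ".join(entity.label for entity in matched)
    return f"{prompt} (featuring: {names})", matched


people = [
    Entity("Alice", "person", {"my family", "my wife"}),
    Entity("Sam", "person", {"my family", "my son"}),
    Entity("Biscuit", "pet", {"my dog"}),
]
expanded, subjects = resolve_personal_references(
    "A claymation-style portrait of my family at the beach", people
)
print(expanded)  # A claymation-style portrait of my family at the beach (featuring: Alice, Sam)
```

The matching runs on relationship phrases the user supplied rather than on guesses from the noun alone, so one phrase such as "my family" can resolve to several labeled people at once.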

Chronology of Google’s AI Integration

The path to Personal Intelligence has been a multi-year journey for Google, characterized by a steady transition from search-centric tools to proactive AI assistants.

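- May 2023: At the Google I/O conference, the company announced its PaLM 2 model and revealed that Gemini, a next-generation multimodal model, was in training. It also previewed Magic Editor, a generative editing tool for Google Photos.

- Late 2023: In September, Google’s Bard chatbot gained Extensions that could find and summarize content from Gmail, Docs, and Drive. In December, the company launched Gemini 1.0.

- Early 2024: Google rebranded Bard as Gemini, consolidating its consumer AI efforts under a single, multimodal brand.

- Mid-2024: Google brought Magic Editor, previously exclusive to Pixel phones, to more Google Photos users, setting the stage for more advanced generative features.

- August 2025: Google released Gemini 2.5 Flash Image, the model that had been tested publicly under the codename "Nano Banana." The release brought conversational image generation and editing to the Gemini app.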

- Present: The rollout of Nano Banana 2 with Personal Intelligence represents the culmination of these efforts, moving beyond mere editing and into the realm of fully personalized creation.

Deep Integration with Google Photos and Workspace

The most transformative aspect of this update is the synergy between Gemini and Google Photos. For roughly a decade, Google Photos has used machine learning to group similar faces so that users can label people and pets. Personal Intelligence taps into these existing labels. When a user grants permission, Gemini can treat each label as a reference to a specific person or pet, so a name used in a prompt points to that individual’s photos.

This allows for a level of personalization previously unavailable in mainstream AI tools. Beyond family portraits, users can experiment with diverse artistic mediums, such as:

- Synthwave or Cyberpunk: Placing oneself in a futuristic neon cityscape.

- Classic Oil Painting: Reimagining a vacation photo in the style of the Old Masters.

- Instructional Visuals: Creating custom diagrams or "how-to" images that feature the user’s actual tools or workspace environment.

Furthermore, the integration extends to Gmail and Google Docs. If a user is drafting a story in Docs about a specific fictional setting they’ve described in detail, Gemini can reference that document to generate an accompanying illustration that matches the written description without the user needing to copy and paste text into a prompt box.
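The Docs scenario amounts to finding the relevant passage and folding it into the image prompt. The sketch below assumes that shape. Google has not described its retrieval method, so simple word-overlap scoring is used here purely for illustration. `most_relevant_passage` and `build_illustration_prompt` are hypothetical names and are not part of any Google API.

```python
import re
from collections import Counter

# Small stopword list so filler words do not dominate the overlap score.
STOPWORDS = {"a", "an", "and", "at", "for", "in", "is", "of", "on", "the", "to", "with"}


def tokenize(text: str) -> list[str]:
    """Lowercase words with stopwords removed."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def most_relevant_passage(document: str, request: str) -> str:
    """Return the paragraph sharing the most words with the request, or '' if nothing overlaps."""
    paragraphs = [p.strip() for p in document.split("\n\n") if p.strip()]
    if not paragraphs:
        return ""
    request_words = set(tokenize(request))

    def score(paragraph: str) -> int:
        counts = Counter(tokenize(paragraph))
        return sum(counts[w] for w in request_words)

    best = max(paragraphs, key=score)  # max() keeps the earliest paragraph on ties
    return best if score(best) > 0 else ""


def build_illustration_prompt(document: str, request: str, max_chars: int = 500) -> str:
    """Attach the best-matching passage so the user need not paste it in (hypothetical)."""
    passage = most_relevant_passage(document, request)[:max_chars]
    if not passage:
        return request
    return f"{request}\nSetting details from the user's document:\n{passage}"


story = (
    "Chapter 1\n\n"
    "The harbor town of Vell sits under violet cliffs, its lighthouse glowing green.\n\n"
    "Mira packed her bags before dawn."
)
print(build_illustration_prompt(story, "Illustrate the harbor town of Vell"))
```

A production system would more plausibly rank passages with embeddings than with word counts. The flow would be the same either way: locate the passage, then attach it to the request on the user’s behalf.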

Privacy, Security, and the "Opt-In" Mandate

The prospect of an AI "reading" a private photo library and scanning personal emails inevitably raises significant privacy concerns. Industry analysts have pointed out that for many users, the photo library is one of the most sensitive repositories of personal data.

In response, Google has adopted a strict "opt-in" model for Personal Intelligence. The feature is not enabled by default. Users must manually grant Gemini permission to access specific data silos, such as Google Photos or Gmail. Furthermore, Google has outlined several layers of protection:

- Local Processing vs. Cloud Processing: While some generation occurs in the cloud for high-fidelity results, the "Nano" model is designed to handle a portion of the contextual processing on the device itself, minimizing the amount of data transmitted to external servers.

- User Control: Users can toggle Personal Intelligence off at any time. They also have the ability to exclude specific people, dates, or albums from the AI’s "memory."

- Safety Filters: Google has implemented rigorous filters to prevent the generation of deepfake content that could be used for malicious purposes. The system is designed to recognize and block requests that involve non-consensual imagery or sensitive depictions of public figures.
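The opt-in model and exclusion controls can be expressed as a filter that runs before any personal data reaches the model. This is a minimal sketch under stated assumptions. Google has not published how Personal Intelligence enforces these settings, and `ContextItem`, `PrivacySettings`, and `filter_context` are hypothetical names. The sketch shows one key property: every setting starts in its most private state, and each exclusion removes an item outright rather than merely ranking it lower.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


# Hypothetical record for one piece of personal data (a photo, email, or doc).
@dataclass
class ContextItem:
    source: str                                # e.g. "photos", "gmail", "docs"
    item_id: str
    people: frozenset[str] = frozenset()       # labeled people who appear in the item
    album: Optional[str] = None
    created: Optional[date] = None


# Hypothetical settings object; every field starts in its most private state.
@dataclass
class PrivacySettings:
    enabled: bool = False                                              # opt-in: off by default
    granted_sources: set[str] = field(default_factory=set)             # data silos the user allowed
    excluded_people: set[str] = field(default_factory=set)
    excluded_albums: set[str] = field(default_factory=set)
    excluded_ranges: list[tuple[date, date]] = field(default_factory=list)  # inclusive date ranges


def is_allowed(item: ContextItem, settings: PrivacySettings) -> bool:
    """Apply the master switch, per-source consent, then each exclusion in turn."""
    if not settings.enabled or item.source not in settings.granted_sources:
        return False
    if item.people & settings.excluded_people:
        return False
    if item.album is not None and item.album in settings.excluded_albums:
        return False
    if item.created is not None and any(
        start <= item.created <= end for start, end in settings.excluded_ranges
    ):
        return False
    return True


def filter_context(items: list[ContextItem], settings: PrivacySettings) -> list[ContextItem]:
    """Keep only the items the user has permitted the model to see."""
    return [item for item in items if is_allowed(item, settings)]


photos = [
    ContextItem("photos", "p1", frozenset({"Alice"}), "Beach trip", date(2024, 7, 1)),
    ContextItem("photos", "p2", frozenset({"Bob"}), "Office", date(2024, 3, 2)),
    ContextItem("gmail", "m1"),
]
print(filter_context(photos, PrivacySettings()))  # [] because nothing is shared until the user opts in
```

After the user turns the feature on and grants only "photos", the Gmail item stays hidden. Adding "Bob" to the excluded people, or excluding the summer date range, then removes the matching photos from the context the model receives.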

Market Context and Competitive Landscape

Google’s move comes at a time of intense competition in the AI sector. Apple’s "Apple Intelligence," announced in June 2024, similarly promises to use "personal context" to assist users across iOS and macOS. Meanwhile, OpenAI’s image generation models and Midjourney remain strong competitors on raw artistic quality. However, they lack the deep integration into a user’s personal productivity suite that Google provides.

By leveraging its dominance in the cloud storage and email markets, Google is positioning Gemini as a more "useful" AI than its competitors. While Midjourney can create a beautiful image of a generic person, Google can create a beautiful image of you. This utility is expected to drive subscriptions for Google’s premium tiers, since the feature is currently exclusive to Google AI Plus, AI Pro, and AI Ultra subscribers.

Broader Implications and Future Outlook

The implications of Personal Intelligence extend beyond simple fun and creativity. For small business owners, this technology could allow for the creation of marketing materials featuring their actual products and staff without the need for expensive photoshoots. For families, it offers a new way to preserve and reimagine memories, turning a standard smartphone photo into a piece of digital art.

As the feature rolls out to mobile users in the United States, Google has confirmed plans to bring these personalized tools to the Chrome desktop browser in the near future. This expansion will likely include further integration with Google Workspace, potentially allowing for real-time image generation within Google Slides presentations based on the slide’s content.

In conclusion, Google’s integration of Personal Intelligence with Nano Banana 2 represents a shift toward an "invisible" AI—one that understands the user so well that the need for complex interaction disappears. While the privacy trade-offs will remain a point of discussion, the technological achievement of creating a creative partner that truly "knows" its user is a landmark moment in the digital age. As AI continues to become more entwined with our personal data, the boundary between our digital archives and our creative futures will continue to blur, offering a glimpse into a world where technology does not just assist the imagination, but actively participates in it.